Bias-inducing geometries: an exactly solvable data model with fairness implications

Stefano Sarao Mannelli, Federica Gerace, Negar Rostamzadeh, Luca Saglietti

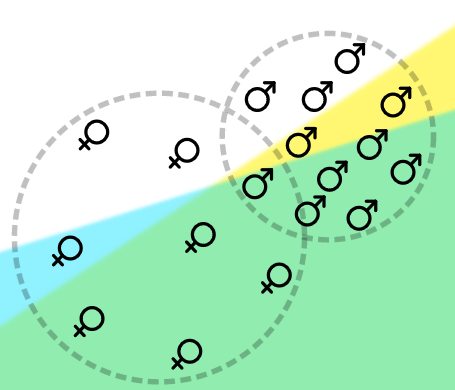

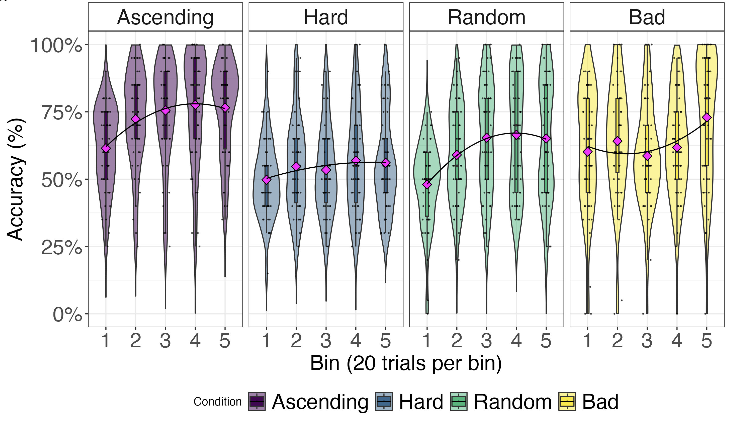

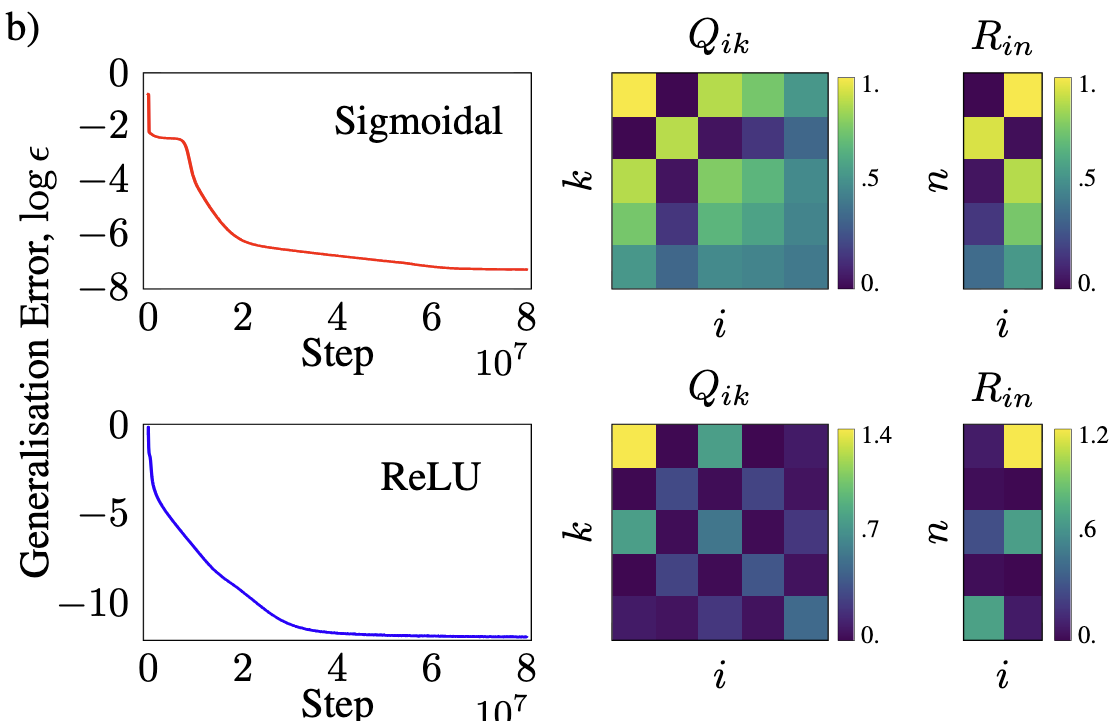

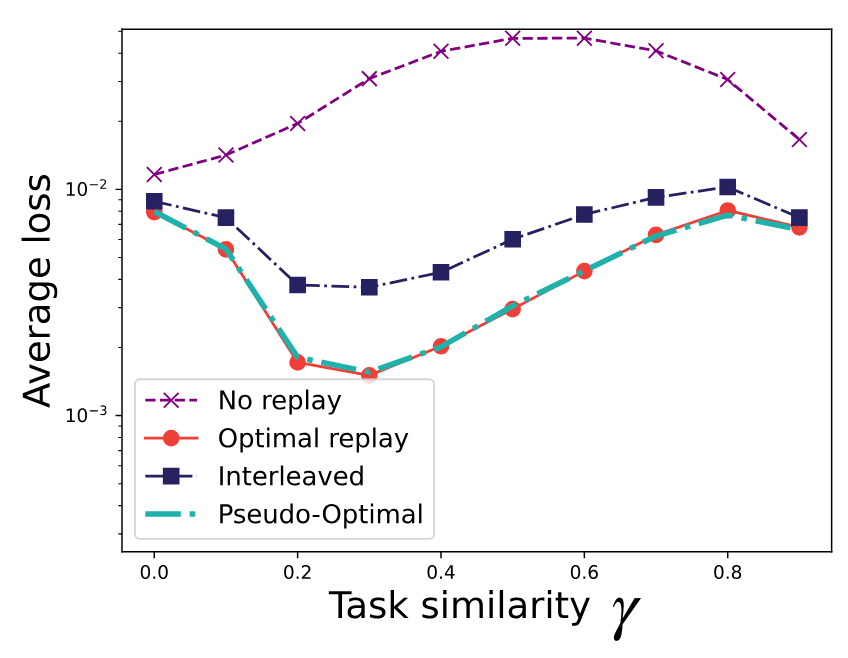

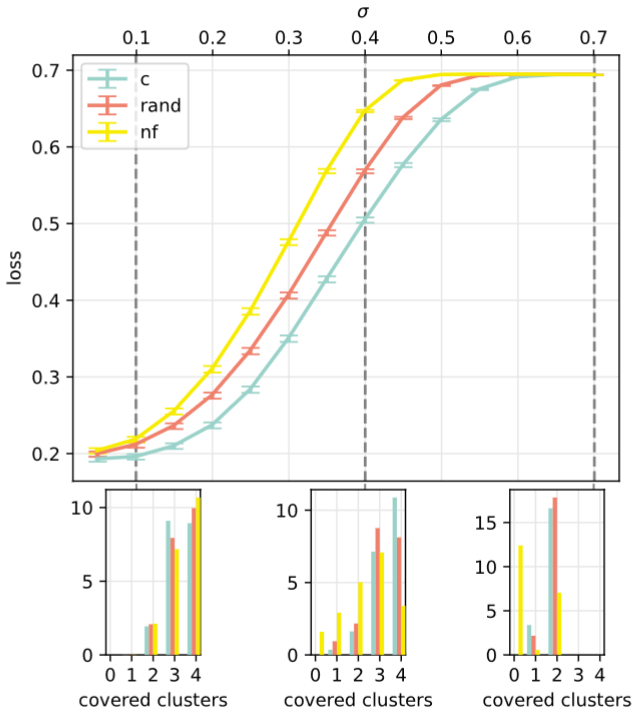

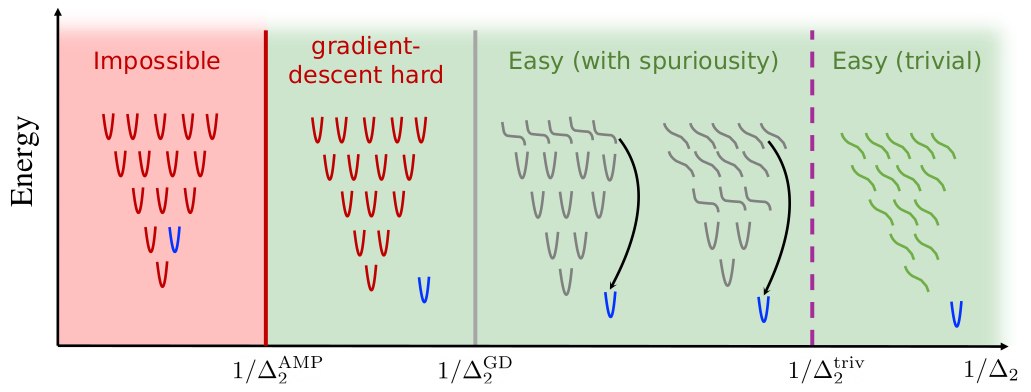

Machine learning (ML) may be oblivious to human bias, but it is not immune to its perpetuation. Marginalisation and iniquitous group representation are often traceable in the very data used for training, and may be reflected or even enhanced by the learning models. In the present work, we aim to clarify the role played by data geometry in the emergence of ML bias. We introduce an exactly solvable high-dimensional model of data imbalance, where parametric control over the many bias-inducing factors allows for an extensive exploration of the bias inheritance mechanism. Through the tools of statistical physics, we analytically characterise...